Being Polite to AI Isn’t the Problem. Dirty Energy Is.

Recently, articles have been popping up with an unexpected twist:

“Saying please and thank you to ChatGPT is destroying the planet.”

It sounds absurd: a polite dystopia where good manners cause environmental collapse. But like many sensational claims, there’s a grain of truth beneath the noise.

Yes, AI responses have an energy cost. And yes, friendly-sounding replies are often longer and more resource-intensive than cold, robotic ones.

But let’s be clear: it’s not your politeness that’s the problem.

The real issue isn’t what you say; it’s how the system responds, and the energy infrastructure that powers it.

In this post, we’ll take a closer look at what’s really going on.

Key Takeaways

- Saying “please” or “thank you” to AI doesn’t harm the planet. The real issue lies in how AI generates responses and the energy required to run large data centres.

- AI like ChatGPT breaks text into tokens (not full words), and every token processed requires compute power. Polite or conversational responses often use more tokens, which means more energy.

- Instead of blaming politeness, we should focus on powering AI with renewable energy, making models more efficient, and offering tone-control features to balance friendliness with brevity.

Tokens are Behind Energy Costs

AI models like ChatGPT don’t read and write like humans do. Instead, they break text down into tokens: small chunks that can be whole words, parts of words, or even just punctuation.

Roughly speaking, 1 token ≈ ¾ of a word, so:

100 tokens ≈ 75 words

Here’s a practical example:

| Text | Word Count | Token Count | Reason |
|---|---|---|---|
| Hello | 1 | 1 | Simple word = 1 token |
| Don’t | 1 | 2 | Split into Don + 't |
| Unbelievable | 1 | 3 | Split into Un + believ + able |
| Let’s go to the park. | 5 | 7 | Let + 's + go + to + the + park + . (Spaces prefix words and punctuation counts too!) |
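The ¾ rule of thumb is easy to turn into a quick estimator. The sketch below is only that rule applied to a whitespace word count; it is not a real tokenizer, and the function names are made up for illustration. Treat the result as an average: it happens to match the 7 tokens for the last row of the table, but it overestimates short inputs like "Hello".

```python
import math

WORDS_PER_TOKEN = 0.75  # rule of thumb from the text: 1 token ≈ ¾ of a word


def estimate_tokens(text: str) -> int:
    """Rough token estimate from a whitespace word count (not a real tokenizer)."""
    words = len(text.split())
    return math.ceil(words / WORDS_PER_TOKEN)


def estimate_words(tokens: int) -> int:
    """Rough word count for a given number of tokens."""
    return round(tokens * WORDS_PER_TOKEN)


print(estimate_words(100))                       # 100 tokens ≈ 75 words
print(estimate_tokens("Let's go to the park."))  # 5 words
```

For exact counts you would need the tokenizer that belongs to the specific model, since each model family splits text differently.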

Tokens = Compute = Cost = Emissions

The more tokens generated by the model, the more it costs to produce the response — both in money and energy.

You could type:

“How many bones in human body?”

And get:

- Concise answer: "206." → ~1 token

- Polite answer: "Of course! Humans typically have 206 bones in their bodies." → ~15–25 tokens

Multiply this kind of friendly verbosity by billions of queries per day, and the energy usage starts to add up.
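To see how that adds up, here is a back-of-the-envelope sketch. The token counts come from the example above (using 20 as the midpoint of 15–25). The volume of one billion queries per day is only a stand-in for "billions", and `joules_per_token` is a hypothetical parameter: this post does not give a measured figure, so you would have to supply your own.

```python
JOULES_PER_KWH = 3.6e6  # 1 kWh = 3.6 million joules


def extra_tokens_per_day(concise_tokens: int, polite_tokens: int,
                         queries_per_day: int) -> int:
    """Extra tokens generated per day if every reply is polite instead of concise."""
    return (polite_tokens - concise_tokens) * queries_per_day


def extra_energy_kwh(extra_tokens: int, joules_per_token: float) -> float:
    """Convert extra tokens to kWh. joules_per_token is an assumption, not a measurement."""
    return extra_tokens * joules_per_token / JOULES_PER_KWH


# ~1 token vs. ~20 tokens, one billion queries per day (illustrative volume)
extra = extra_tokens_per_day(concise_tokens=1, polite_tokens=20,
                             queries_per_day=1_000_000_000)
print(f"{extra:,} extra tokens per day")
```

Under those assumptions the difference is 19 billion extra tokens a day. How much energy that represents depends entirely on the per-token figure you plug into `extra_energy_kwh`.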

The Real Culprit is Our Desire for Friendly Machines

This part might surprise you:

We trained AI to be polite.

The whole point of models like ChatGPT is to feel approachable, respectful, and safe — not cold or clinical.

So when it responds with:

“Absolutely! I’d be happy to help with that.”

…it’s doing exactly what we asked of it.

But while a human saying “thank you” is no big deal, AI responses are being generated trillions of times across the globe, each one requiring infrastructure to process. That’s where the environmental cost comes in.

So What Should We Do About It?

Some argue we should stop being polite to AI. But that misses the point.

The problem isn’t politeness. The problem is:

- Energy inefficiency in data centres

- Lack of renewable power sources

- Excessive verbosity without optimisation

Instead of blaming users, let’s focus on real solutions:

- Use cleaner energy to power data centres

- Improve model efficiency to do more with fewer tokens

- Offer users “concise vs. friendly” tone settings

- Encourage developers to design short-but-kind phrasing templates

The goal should be sustainable design, not sterile machines.
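As a rough idea of what a tone setting with short-but-kind templates could look like, here is a minimal sketch. Everything in it is hypothetical: the `REPLY_TEMPLATES` dictionary, the `format_reply` function, and the wording of the templates are made up for illustration and do not reflect how any real chatbot works.

```python
# Hypothetical reply templates: both stay kind, one is just shorter.
REPLY_TEMPLATES = {
    "concise": "{answer}",
    "friendly": "Happy to help! {answer}",
}


def format_reply(answer: str, tone: str = "friendly") -> str:
    """Wrap a bare answer in the template for the chosen tone setting."""
    if tone not in REPLY_TEMPLATES:
        raise ValueError(f"Unknown tone: {tone!r}")
    return REPLY_TEMPLATES[tone].format(answer=answer)


print(format_reply("Humans typically have 206 bones.", tone="concise"))
print(format_reply("Humans typically have 206 bones.", tone="friendly"))
```

The point of the design is that the friendly version costs only a few extra tokens, and the user decides whether they want them.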

Keep Your Manners, Just Power Them Better

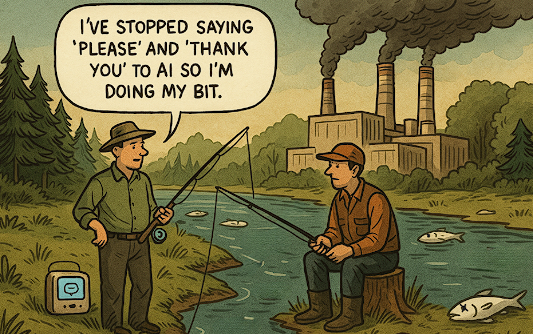

Let’s not fall into the trap of blaming manners for environmental harm.

That’s like blaming paper straws for climate change while oil rigs burn in the distance.

Being polite to AI isn’t a crime. It’s a reflection of how we want machines to treat us — with dignity, clarity, and warmth.

So go ahead.

Say “please.”

Say “thank you.”

Just make sure the data centre you’re talking to is running on renewables.

PS: This blog post is ≈ 964 tokens.

Generating that many tokens takes about as much energy as a standard LED bulb left on for 2 minutes, powered by solar.

So we’re good 😉